Automation, Augmentation, and Vice Versa.

The Reconfiguration of Work That Doesn't Look Like Either

Written by Marihum Pernia

The Error of the Primary Sensor

The labor market is an instrument panel, not a single gauge. Yet public debate about AI and work has latched onto one metric, unemployment, as if it were the whole story. This is a familiar mistake. Measures of job offshorability, robot adoption, and trade exposure each produced literatures that were either too blunt to detect early structural change or divided not over minor magnitudes but over the sign of the effects. The lesson is not that measurement is futile. It is that measurement must be matched to the phase of a shock, and unemployment is a lagging indicator of reconfiguration.

This essay argues that the AI-driven transformation of labor is already underway, but it is legible only if we read the right instruments. Two early signals—one from the market, one from measurement—converge on a finding that the dominant narrative misses: the first disruption is not mass unemployment but a quiet contraction of entry-level pathways into cognitive work. And the trajectory from here is not determined by the technology itself but by an organizational fork—a design choice between deploying AI as augmentation that preserves human judgment, or as automation that eliminates the pipeline by which judgment is formed.

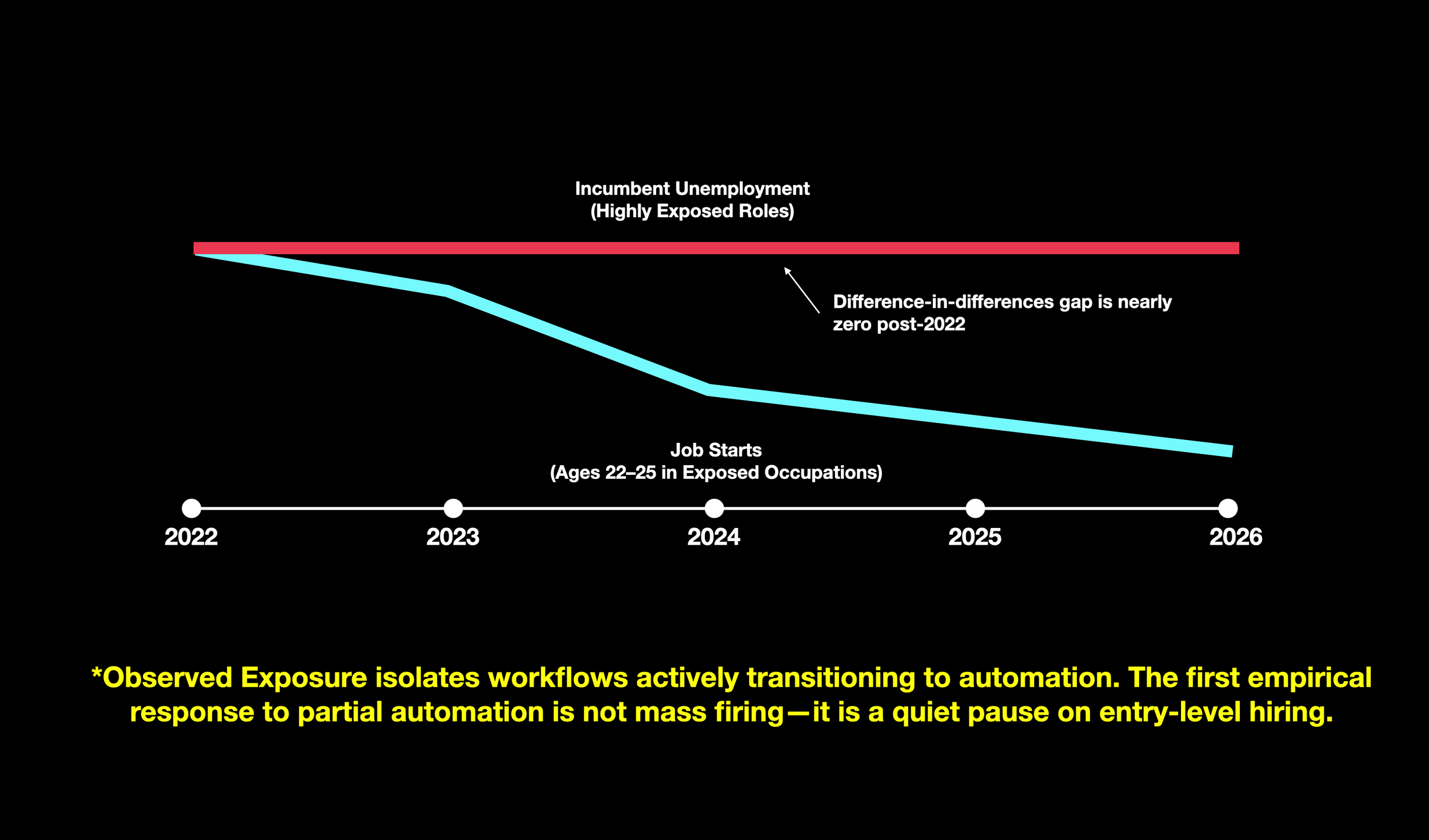

This is not replacement in the conventional sense: a worker displaced by a machine. Nor is it augmentation as typically described: a worker made faster by a tool. It is something more structural: a reconfiguration of how work is organized, how expertise is transmitted, and how the labor market's reproductive capacity functions. Reconfiguration names the third possibility: the transformation of staffing pyramids, entry pathways, and skill-formation systems that can occur even when aggregate employment statistics remain stable. The harm is not a spike on a dashboard. It is a slow mutation of the system's capacity to replenish the judgment, expertise, and institutional knowledge on which the economy depends.

The evidence is already visible in the data. Massenkoff and McCrory (2026) find that aggregate unemployment among highly exposed workers has remained flat since late 2022: the difference-in-differences gap between the most exposed and unexposed groups is small and statistically indistinguishable from zero. But the more revealing movement is upstream. For young workers aged 22–25, entry into exposed occupations appears to have slowed relative to entry into unexposed occupations. The mechanism is not a spike in separations; it is a decline in job starts. Such a decline can be hidden in standard unemployment series because many labor-market entrants do not yet appear as unemployed: they may return to school, take different jobs, or exit the labor force entirely (Massenkoff & McCrory, 2026).

At the same time, the market is not waiting for labor statistics to catch up. A growing class of AI-first businesses is targeting the gap between an output that can be specified and a process that is still labor-intensive. Their wedge is not better software features but a different procurement contract: "We will do the work." Two signals—the market shift toward outcome-selling and the measurement gap between theoretical and observed AI exposure—make the reconfiguration visible. Together, they imply a different set of questions. Not "Will AI cause mass unemployment?" but: Where is work being repackaged into outcomes? Where is deployment deepening from assistance into automation? And where will the first harms appear—in layoffs, or in the quieter collapse of entry-level pathways?

The argument unfolds in five moves. First, we examine the market logic of AI-first services and why outcome-selling is an early indicator of labor substitution pressure. Second, we lay out the observed-exposure framework and what the early evidence does and does not show. Third, we identify diagnostic sectors where the effects should become legible first. Fourth, we introduce co-intelligence as a framework for preserving human capability in the presence of expanding delegation. Finally, we map plausible futures—ranging from augmentation-led productivity to structural pipeline crisis—and identify the indicators that would falsify each scenario.

Signal 1: AI-First Services and the Re-Pricing of Work

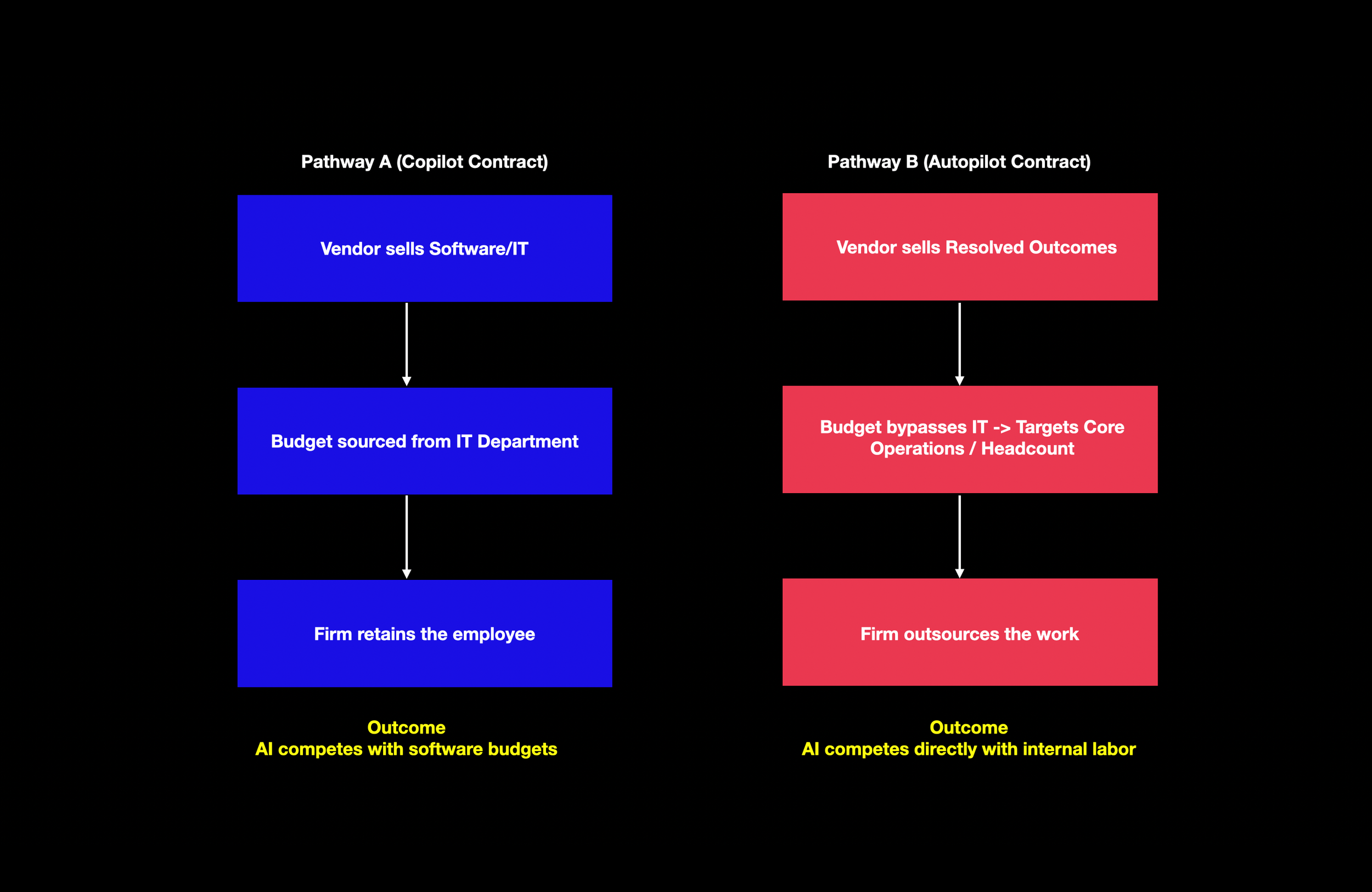

A technological capability becomes economically consequential only when it is bundled into a product that buyers can purchase and organizations can operationalize. For most of the software era, that product form has been the tool: a system sold to a user, embedded in a department, and governed by internal adoption. The implicit wager of the “copilot” paradigm is that AI will be purchased like other software (licensed, trained on, integrated into routines) and that the professional remains the accountable agent.

The *AI-first services* thesis proposes a different product form: not a tool but an outcome. In the most compressed version of the claim, the next wave of AI businesses will not primarily sell software that makes workers faster; they will sell work itself, delivered by an AI-centric operation. The distinction is not semantic. It changes what is purchased, where the budget sits, and, crucially, where labor substitution pressure is felt.

To keep the argument empirical rather than rhetorical, it helps to define terms at the level of contract.

A copilot contract sells assistance. The buyer retains the obligation to assemble inputs, provide context, supervise quality, and sign off on outcomes. Labor is complemented because the organization still employs the worker and buys a tool to make them more productive.

An autopilot contract sells an output. The buyer purchases a completed unit of work (an answered ticket, a reconciled document bundle, a draft artifact, a processed transaction), typically with an SLA-like promise. The operational responsibility shifts outward: from the buyer's headcount to the vendor's process. Labor is now competed with because the economic comparison is no longer “tool versus tool” but “vendor outcome versus internal staffing.”

A useful cross-check is that this “do the job” model is precisely where several investors expect AI-enabled services to scale first: outsourced, process-like work with objective outcomes, rather than brand-heavy advisory work where credibility and liability remain central (Firstminute Capital, 2024). Emergence Capital frames *AI-native services* as a distinct operating model whose viability hinges on whether delivery costs can scale non-linearly as automation improves (Saper et al., 2025).

This difference is what makes AI-first services a market signal rather than a technological claim. Organizations increasingly treat certain categories of work as procurable outputs rather than as roles. That shift can occur even when AI deployment inside firms remains shallow. It is therefore an early indicator: a change in the market boundary of the firm that can precede, and eventually drive, changes in hiring.

What makes this signal more than a conceptual reframing is that it is increasingly legible in capital allocation. A March 2026 investor and operator report argues that the convergence of AI automation and buy-and-build dealmaking has already created a distinct startup category, attracting over $3 billion in committed capital since late 2023. It identifies 16 companies with 2024–2025 funding activity in the US and Europe that match its working definition of an AI-first roll-up (AI-First Services Roll-Ups Report, 2026). The substance of the model is not subtle. The thesis is financialized: acquire services businesses at approximately 5–8× EBITDA, deploy AI to lift gross margins from roughly 10–20% toward 40–65%, and then command technology-like multiples on exit. The core wager is multiple arbitrage powered by automation (AI-First Services Roll-Ups Report, 2026). The market signal is being institutionalized as a creation model—General Catalyst formalizes the sequence as a pipeline: incubate, prove automation, acquire, scale.
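The arithmetic behind this thesis can be sketched in a few lines. This is an illustrative sketch, not the report's model. Only the entry-multiple range and the gross-margin ranges come from the text above; the revenue figure, the overhead ratio, the 12× exit multiple, and all names in the code are assumptions introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class ServicesBusiness:
    revenue: float         # annual revenue (hypothetical figure)
    gross_margin: float    # gross profit as a share of revenue
    overhead_ratio: float  # operating overhead as a share of revenue (assumption)

    @property
    def ebitda(self) -> float:
        # Simplification: EBITDA = gross profit minus operating overhead.
        return self.revenue * (self.gross_margin - self.overhead_ratio)


def value_multiple(before: ServicesBusiness, after: ServicesBusiness,
                   entry_multiple: float, exit_multiple: float) -> float:
    """Exit value divided by purchase price for one acquired business."""
    purchase_price = before.ebitda * entry_multiple
    exit_value = after.ebitda * exit_multiple
    return exit_value / purchase_price


# Gross margins (20% -> 40%) and the 6x entry multiple sit inside the ranges
# quoted in the text; revenue, overhead, and the 12x exit multiple are invented.
before = ServicesBusiness(revenue=10_000_000, gross_margin=0.20, overhead_ratio=0.10)
after = ServicesBusiness(revenue=10_000_000, gross_margin=0.40, overhead_ratio=0.10)

margin_only = value_multiple(before, after, entry_multiple=6, exit_multiple=6)
with_rerating = value_multiple(before, after, entry_multiple=6, exit_multiple=12)
print(f"margin lift alone: {margin_only:.1f}x, with re-rating: {with_rerating:.1f}x")
```

Under these invented inputs, the margin lift alone roughly triples the value of the acquired business, and a higher exit multiple doubles that again. The point of the sketch is the structure of the bet: two multipliers stacked on top of each other, both of which depend on the automation actually working.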

Concrete cases sharpen what *autopilot* means in practice. Crescendo charges by resolved outcome rather than by headcount, claiming gross margins in the 60–65% range, roughly four times an industry baseline of around 15%. Dwelly, in European property management, explicitly rejects the SaaS wedge in favor of acquiring agencies to capture the full P&L and consolidating operations onto a unified technology stack (AI-First Services Roll-Ups Report, 2026).

For builders and investors, three implications follow. First, the wedge matters because it determines which budget you compete for (software versus labor). Second, operational trust becomes product: an autopilot seller is accountable for outcomes, not just model performance. Third, moats shift from distribution to operations: owning the workflow creates first-party operational data and faster iteration loops that compound with scale (AI-First Services Roll-Ups Report, 2026).

*Autopilot* is not synonymous with full automation. In practice, an AI-first service can be a hybrid pipeline in which AI compresses some tasks while humans handle exceptions. What is novel is not that humans remain present but that they are repositioned: fewer people are required per unit of output, and their work shifts from production to supervision, from execution to exception-handling.

Yet this signal should not be romanticized. AI-first services can be a vehicle for genuine automation, but they can also be a vehicle for automation theater—what Emergence calls Mirage PMF, where revenue and retention obscure whether AI is actually improving unit economics (Saper et al., 2025). The question is not whether autopilot services will exist—they already do. The question is whether their economics will scale non-linearly or remain tethered to human labor. This is the hinge between augmentation and replacement as social outcomes.

Even under cautious assumptions, the signal matters for one reason: it changes the incentives that govern deployment. In a copilot world, AI adoption competes with organizational inertia. In an autopilot world, AI adoption can bypass inertia by bypassing the organization. That bypass is a short path from capability to substitution pressure.

This outcome-selling shift is itself a reconfiguration mechanism. It does not necessarily eliminate workers in the traditional sense—it reorganizes the boundary between firm and market, changes who is accountable for the output, and alters the staffing logic that determines whether junior roles exist at all. The reconfiguration can be underway even when no one has been fired.

Signal 2: Observed Exposure and the Hidden Disruption

The second signal begins with a deceptively simple distinction: what AI is capable of in principle is not what the economy is deploying in practice. Much of the early literature on AI and labor proceeds by ranking occupations by theoretical exposure. But theoretical exposure is an upper bound. It is a map of what might be possible, not an estimate of what is happening.

Massenkoff and McCrory (2026) propose a method designed for precisely this gap. Their *observed exposure* measure combines three ingredients: O*NET task descriptions (what workers do), a task-level measure of theoretical LLM capability (β), and real usage data drawn from the Anthropic Economic Index (what users are doing with an LLM in practice). They adjust task coverage to distinguish between automated and augmentative usage: automated implementations receive full weight, while augmentative use receives half weight.
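A minimal sketch of how such a measure could be assembled, under stated assumptions. The authors' exact formula is not reproduced here. The full-weight and half-weight treatment comes from the description above; the task importance weights, the field names, the weighted-sum aggregation, and the rule that observed usage is capped by β are all hypothetical choices made for illustration, as are the sample tasks.

```python
from dataclasses import dataclass

AUTOMATION_WEIGHT = 1.0    # automated usage counts in full (from the text)
AUGMENTATION_WEIGHT = 0.5  # augmentative usage counts at half (from the text)


@dataclass
class Task:
    name: str
    importance: float       # share of the occupation's work (assumed; sums to 1)
    beta: float             # theoretical LLM capability for the task, in [0, 1]
    automated_share: float  # share of the task seen in automated usage (assumed)
    augmented_share: float  # share of the task seen in augmentative usage (assumed)


def theoretical_exposure(tasks: list[Task]) -> float:
    """Importance-weighted share of work that an LLM could do in principle."""
    return sum(t.importance * t.beta for t in tasks)


def observed_exposure(tasks: list[Task]) -> float:
    """Importance-weighted share of work covered by actual usage."""
    total = 0.0
    for t in tasks:
        usage = (AUTOMATION_WEIGHT * t.automated_share
                 + AUGMENTATION_WEIGHT * t.augmented_share)
        # Assumption: observed coverage cannot exceed theoretical capability.
        total += t.importance * min(t.beta, usage)
    return total


# Invented task bundle for a hypothetical programming occupation.
tasks = [
    Task("maintain existing code", 0.5, 1.0, 0.4, 0.4),
    Task("review system designs", 0.3, 1.0, 0.0, 0.2),
    Task("meet with clients", 0.2, 0.0, 0.0, 0.0),
]
print(round(theoretical_exposure(tasks), 2), round(observed_exposure(tasks), 2))
```

With these invented inputs the theoretical figure comes out near 0.8 and the observed figure near 0.33. The gap between the two numbers is the quantity of interest: it separates what a model could do from what is being deployed.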

This architecture matters because it operationalizes what is otherwise left as intuition: automation is more likely to move labor demand than augmentation, and work-related usage is more predictive than consumer experimentation. The method produces three early findings that are jointly important.

First, there is a large deployment gap. In Computer and Math occupations, theoretical exposure covers the vast majority of tasks, while observed exposure covers only a fraction. The implication is not that AI is weak; it is that the limiting factor is often diffusion, tooling, verification, legal constraint, or workflow redesign (Massenkoff & McCrory, 2026).

Second, observed exposure is concentrated in specific occupations whose leading automated tasks are legible: software maintenance for programmers, customer interaction for service representatives, document-to-system transcription for data entry roles. This is not merely a ranking; it is a hint about where the first measurable automation workflows are already appearing (Massenkoff & McCrory, 2026).

Third, and most consequential, the early employment signal is not unemployment but entry. The unemployment series for highly exposed and unexposed groups move largely in parallel. But when Massenkoff and McCrory turn to young workers (ages 22–25) and measure job starts, they find suggestive evidence of a decline in entry into high-exposure occupations. The magnitude is tentative, barely statistically significant, and subject to alternative interpretations. Yet it is precisely the signal one would expect if the first response to partial automation is hiring hesitation rather than separation (Massenkoff & McCrory, 2026).

If observed exposure captures the subset of tasks being automated in work-related settings, then firms may react not by firing incumbents but by pausing the creation of new roles whose task bundles are increasingly automatable. The harm channel is less a visible wave of unemployment than a quiet contraction of entry-level opportunities.
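The contrast between a flat unemployment gap and a widening entry gap can be illustrated with a plain difference-in-differences calculation. This is a sketch of the general technique, not the authors' specification, and every rate below is a made-up placeholder rather than a result from the paper.

```python
def diff_in_diff(exposed_pre: float, exposed_post: float,
                 unexposed_pre: float, unexposed_post: float) -> float:
    """Change in the exposed group minus change in the unexposed group."""
    return (exposed_post - exposed_pre) - (unexposed_post - unexposed_pre)


# Placeholder unemployment rates (%): both groups rise by the same amount,
# so the series move in parallel and the gap is zero.
unemployment_gap = diff_in_diff(4.0, 4.5, 4.0, 4.5)

# Placeholder job-start rates (%) for workers aged 22-25: entry falls more
# in exposed occupations, so the gap is negative.
entry_gap = diff_in_diff(2.0, 1.5, 2.0, 1.9)

print(f"unemployment gap: {unemployment_gap:+.1f} pp, entry gap: {entry_gap:+.1f} pp")
```

The same estimator applied to two different outcome variables gives two different readings. An analyst watching only the first would conclude that nothing is happening.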

The distributional detail reinforces why this matters. The workers in the top quartile of exposure were, on average, higher-paid and more educated than unexposed workers, and more likely to be female. If entry into these occupations slows, the consequence is a disruption to the pipeline by which educated cohorts enter high-wage cognitive work and organizations replenish the ranks that later become managers, experts, and judgment-holders (Massenkoff & McCrory, 2026).

This is what reconfiguration looks like in the data: not a displacement event but a structural reshaping of who enters, how they are trained, and whether the pipeline that produces expertise remains intact. No one is fired. No one is made faster. The system itself is mutating.

The observed-exposure framework also bridges back to the first signal. Outcome-selling AI-first services are most viable precisely where observed exposure is already high. The market signal and the measurement signal cohere: the same workflows that raise observed exposure are the ones that can be packaged into autopilot products.

The Governing Fork: From Signals to Futures

The two signals converge on a single structural observation: the early phase of AI-driven labor transformation is already legible, but not where most people are looking.

But neither signal determines what happens next. The same AI capability that enables an autopilot service to replace a team of junior analysts can also be deployed as a copilot that makes those analysts faster, more accurate, and more valuable. The difference is not in the model; it is in the operating model. It is in the contract form, the workflow design, the governance structure, and the staffing logic.

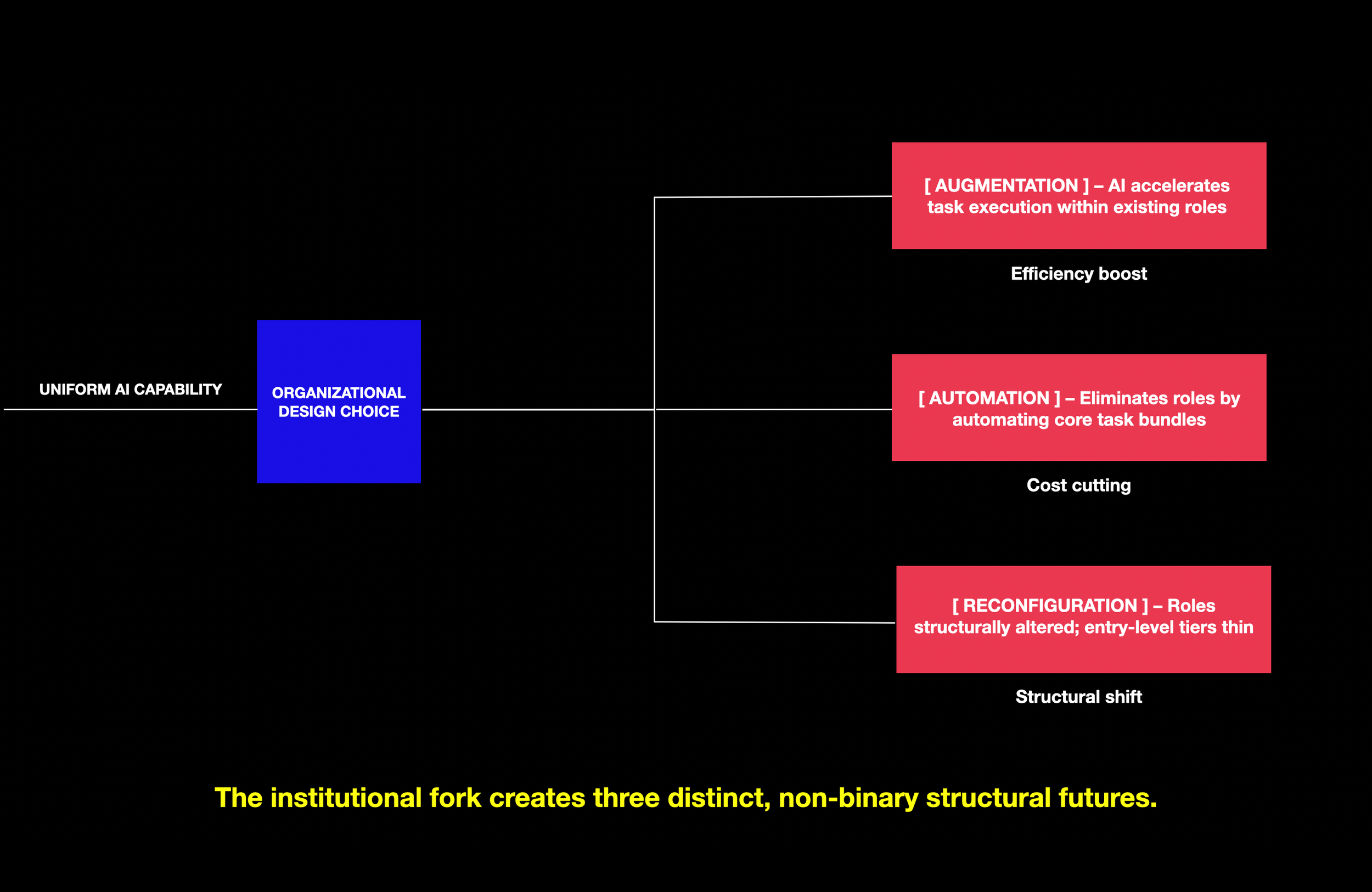

This is the fork that governs which futures are plausible. It is not a technological fork—the models are the same on both branches. It is an organizational and institutional fork, determined by how firms choose to deploy, how markets choose to price, and how regulators choose to constrain. But the fork is not binary. It produces three distinct outcomes, not two.

Augmentation preserves the human as the accountable agent; AI accelerates task execution within existing roles. Headcount may hold or grow; skill requirements shift but the pipeline remains. Replacement eliminates roles by automating their core task bundles; headcount falls; the pipeline is severed. Reconfiguration is the third and most likely near-term outcome: roles are not eliminated outright but structurally altered—entry-level tiers thin, staffing pyramids compress, the boundary between firm and market shifts, and the system by which expertise is formed quietly degrades. Reconfiguration can occur even while employment statistics remain stable. It is the hardest of the three to detect, and therefore the most dangerous to ignore.

The entry-level squeeze documented in Signal 2 is the early expression of reconfiguration. In organizations that deploy AI as augmentation—redesigning junior roles around supervised AI assistance—the squeeze may stabilize. In organizations that deploy AI as automation—packaging task bundles into vendor outcomes, compressing staffing pyramids—the squeeze deepens into replacement. But in a large number of organizations, the outcome will be neither clean augmentation nor outright replacement. It will be reconfiguration: fewer entry-level hires, a shift toward supervision and exception-handling, a pipeline that narrows without closing, and a talent shortage that manifests years later.

If the automation branch dominates, the aggregate effect is a labor market in which experienced incumbents retain their positions while the pipeline that would produce their replacements thins. This is not mass unemployment. It is something quieter: a slow erosion of the system's capacity to replenish judgment, expertise, and institutional knowledge.

The governing fork is therefore not a prediction. It is a design problem. It asks: given that augmentation, replacement, and reconfiguration are all technically possible, what determines which outcome organizations produce? And it points to levers—workflow design, contract structure, governance architecture, regulatory constraint, and educational response—that are, in principle, within the reach of deliberate choice.

Diagnostic Sectors: Where the Labor Story Will Become Legible First

A note on generalizability. The observed-exposure framework is portable in principle, but the current estimates are anchored in US occupational taxonomies, labor-market outcomes, and institutional projections. For a European reader, the correct move is not to import the magnitudes but to import the mechanism and the indicator logic (Massenkoff & McCrory, 2026). The mechanism is: capability is not impact; deployment is impact. Early labor-market effects may appear first in entry and hiring rather than in unemployment.

Selection Criteria

The five sectors were chosen against four criteria: deployment rather than demos, outcome-compatibility, traceability, and pipeline sensitivity.

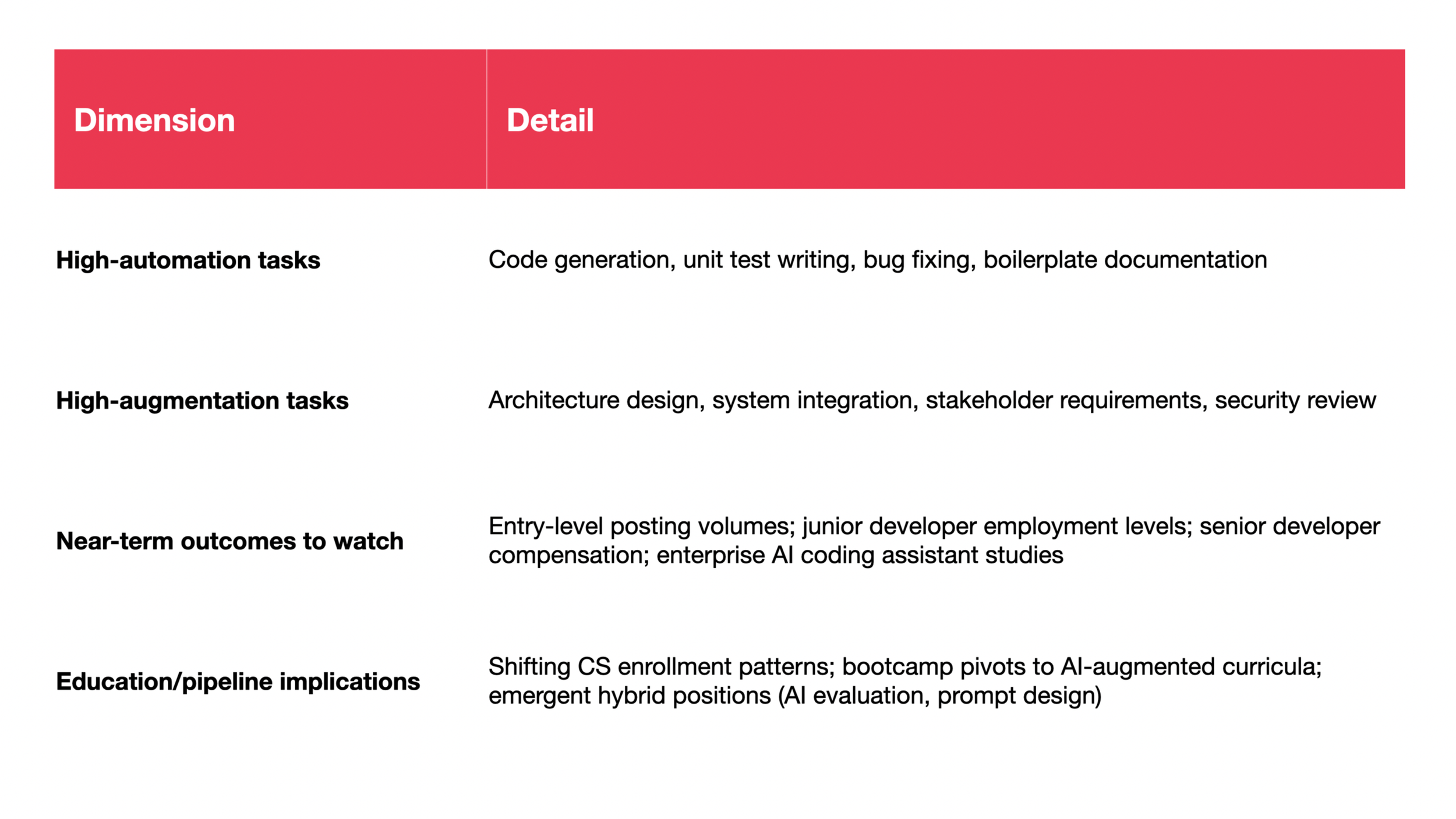

Sector 1: Software Engineering and Computer Programming

Software engineering ranks among the most highly exposed occupations (Massenkoff & McCrory, 2026). The fork here is sharp: organizations that treat AI as a productivity multiplier for junior developers preserve the training pipeline; those that use AI-generated code to reduce junior headcount eliminate it. Reconfiguration in this sector would look like stable overall employment alongside a hollowed-out junior tier—senior developers working with AI, but no pathway producing the next generation of seniors.

Sector 2: Customer Service

Customer service has a large employment base and an automation-dominant use pattern (Massenkoff & McCrory, 2026). The clean split between automatable tier-1 tasks and non-automatable complex escalations makes it the most transparent test case for autopilot contracts. Reconfiguration here would manifest as a shrinking tier-1 workforce with a small, highly skilled escalation layer—fewer total jobs, but no dramatic layoff event.

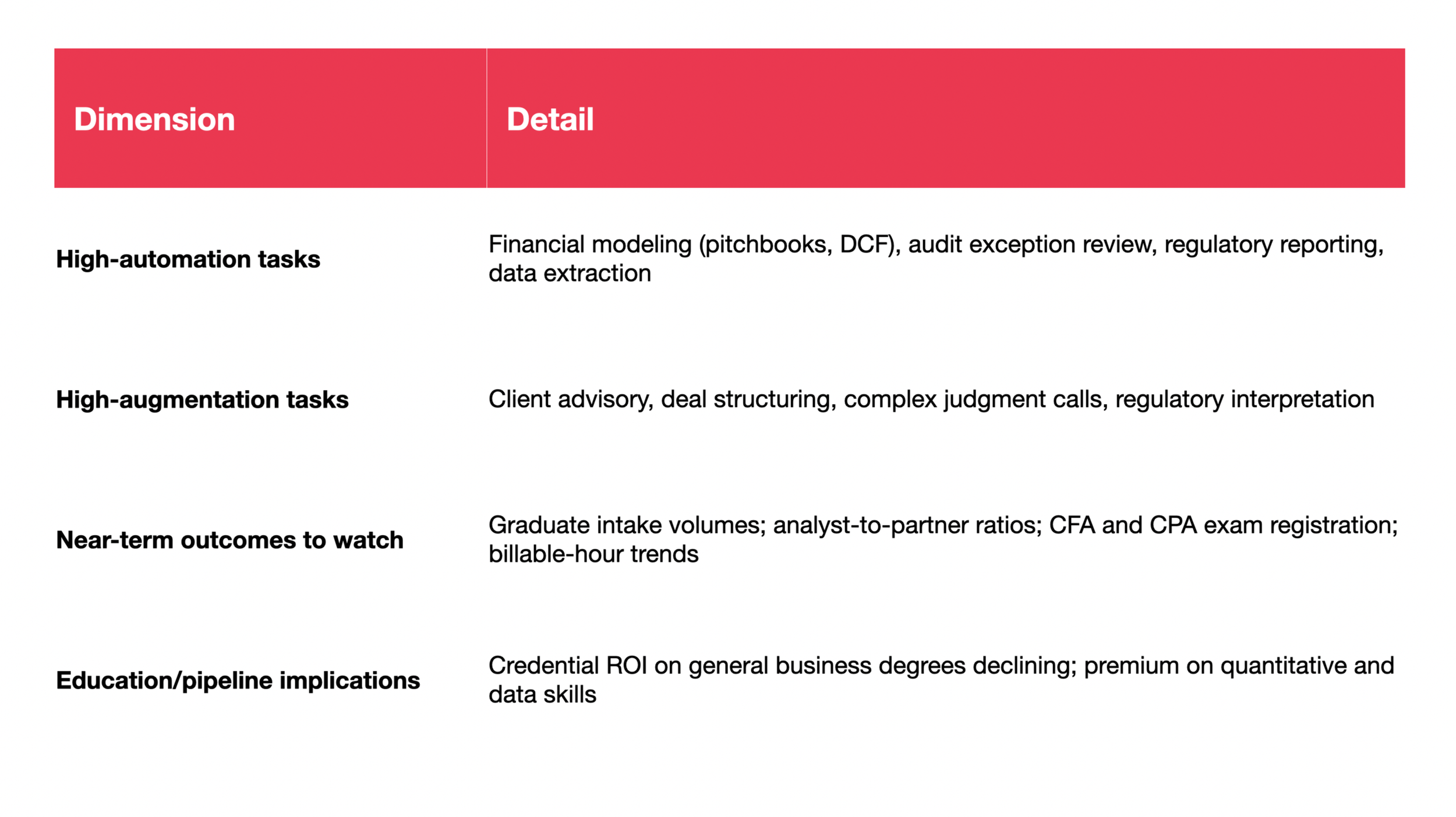

Sector 3: Finance and Business Operations

Finance's staffing pyramid is explicitly hierarchical. If the analyst tier thins, the pipeline problem compounds visibly within a single organizational structure (Massenkoff & McCrory, 2026). Reconfiguration in finance would appear as stable partner headcount alongside declining analyst intake—the pyramid becoming a diamond, with the base narrowing before the top notices.

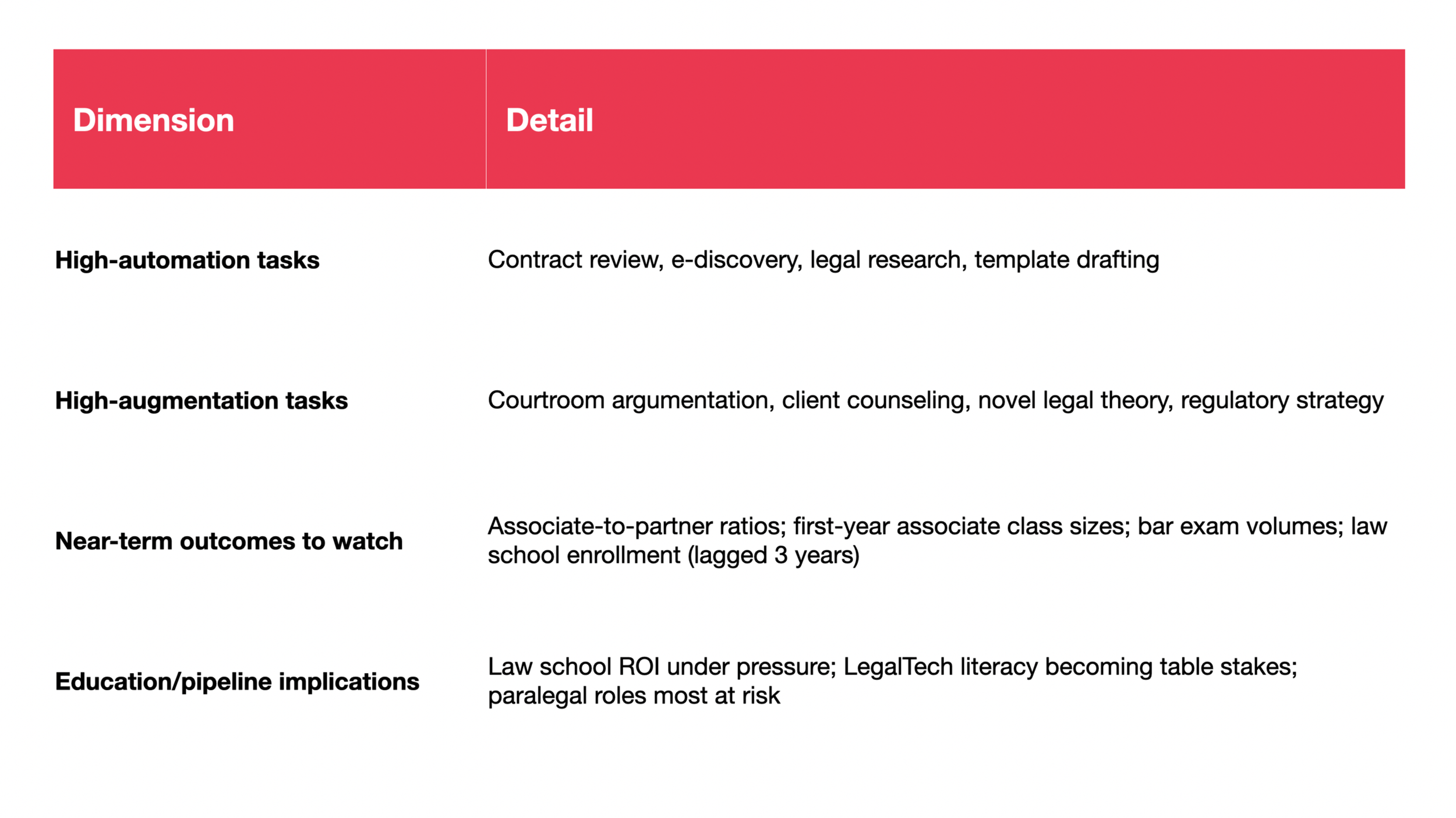

Sector 4: Legal Services

Law is a natural experiment in deployment constraints: the same tasks that are technically automatable may be slowed by ethical obligations, regulatory complexity, and liability regimes (Massenkoff & McCrory, 2026). Reconfiguration would look like stable attorney employment alongside the quiet elimination of paralegal and junior associate tasks—the work still done, but by fewer people aided by systems rather than by teams.

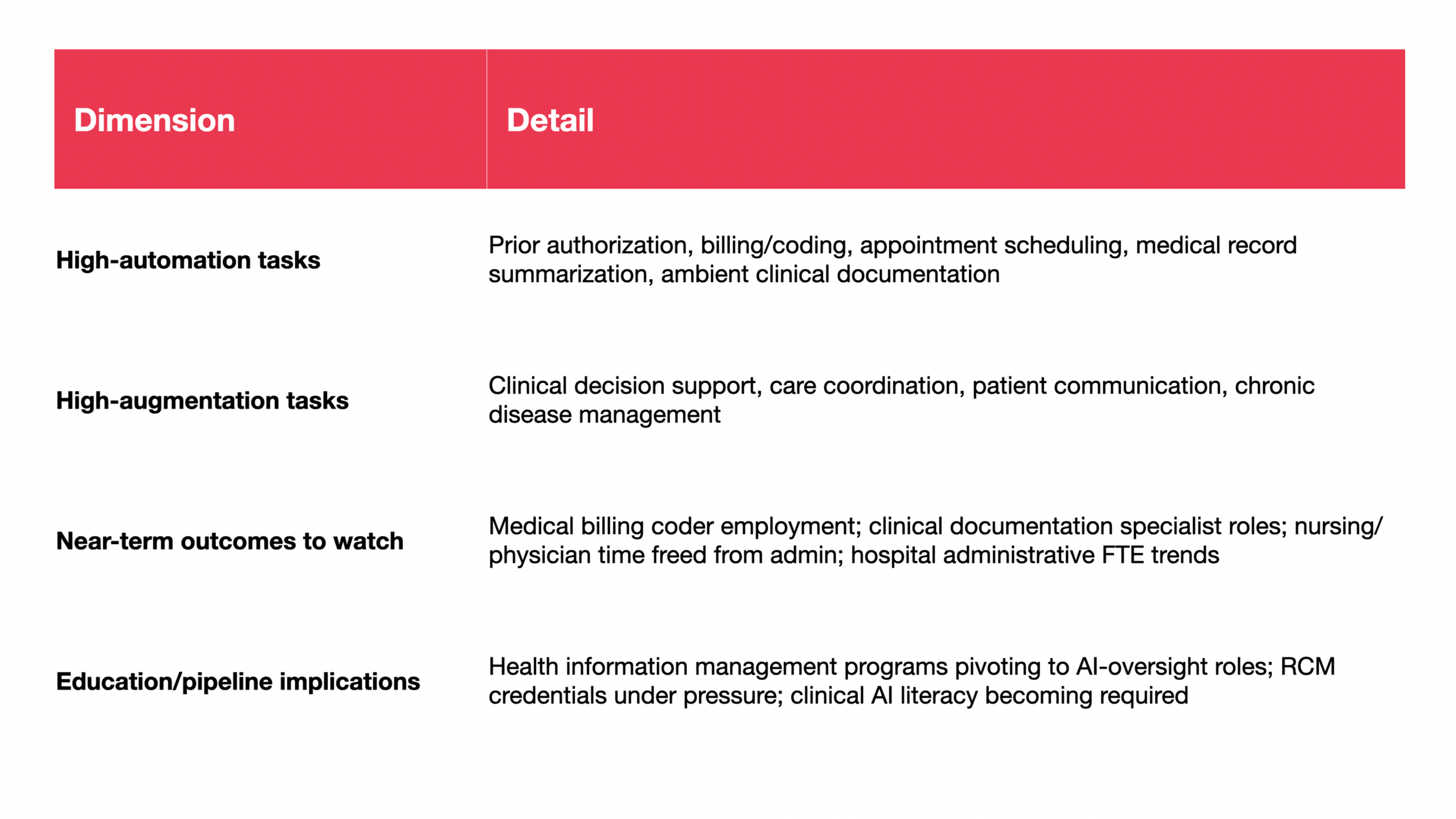

Sector 5: Healthcare Administration

Healthcare administration has the clearest institutional split between augmentation-dominant clinical work and automation-leaning administrative workflows. Governance is moving quickly, including under the EU AI Act. Reconfiguration would manifest as stable clinical headcount alongside declining administrative FTEs—the hospital's cost structure changing without its clinical capacity being visibly affected.

Co-Intelligence: A Framework for Living Between Copilot and Autopilot

In Ethan Mollick's framing, *co-intelligence* names an emergent partnership in which humans and AI systems jointly contribute to knowledge, decisions, and creative outputs, without either becoming fully subordinate to the other (Mollick, 2024).

The Jagged Frontier and the Temptation to Delegate

Mollick and collaborators describe AI capability as a *jagged technological frontier*: an uneven boundary where tasks that look similar to humans fall on opposite sides, so that AI is a performance booster on one side and a failure generator on the other (Dell'Acqua et al., 2026). In a BCG field experiment with 758 consultants, AI increased productivity inside the frontier but reduced correct solutions outside it. The signature risk is that AI works well enough, often enough, to invite uncritical delegation.

Three Modes of Collaboration

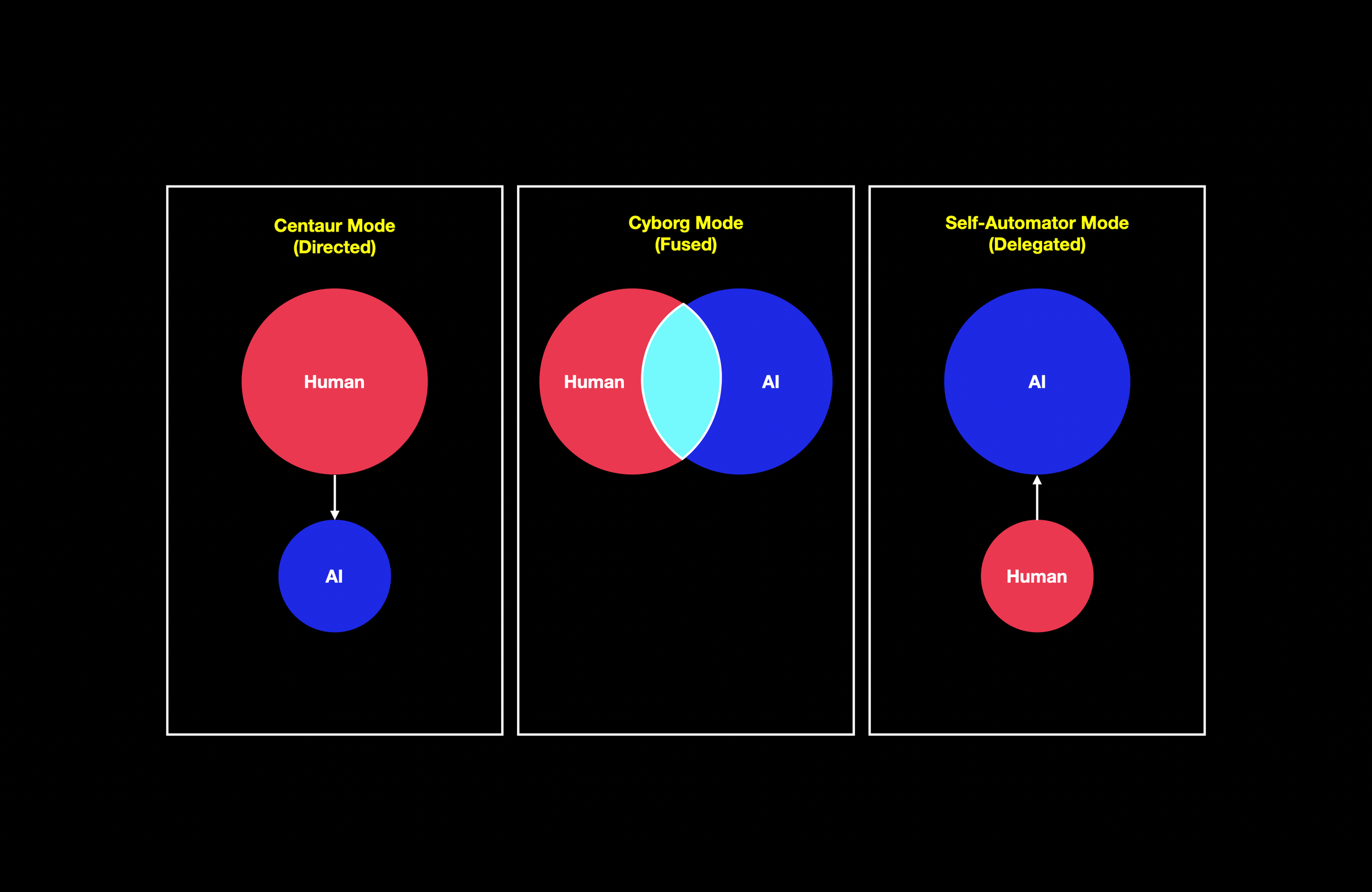

A 2025 HBS working paper identifies three recurring patterns (Randazzo et al., 2025):

> In Centaur (directed) mode, the human remains the primary epistemic agent. This mode is associated with genuine upskilling in domain expertise.

> In Cyborg (fused) mode, human and AI outputs are deeply intertwined. This mode is associated with "newskilling"—acquiring GenAI-related capabilities—but carries the risk of role ambiguity.

> In Self-Automator (delegated) mode, AI plans and executes autonomously while the human disengages. Self-Automators gain neither domain expertise nor AI skill. The organization gets outputs; the worker stops developing.

These modes are not moral categories; they are design choices.

Copilot Versus Autopilot, Reinterpreted

A copilot contract typically encourages Centaur and Cyborg modes. An autopilot contract creates structural pressure toward Self-Automator mode. The near-term question is whether organizations default into self-automation patterns—or deliberately build workflows that preserve judgment formation.

Failure Modes That Matter for Labor

Automation bias, error compounding, and pipeline collapse are the three failure modes that break skill formation. The Self-Automator mode is precisely the one associated with no skilling. If this becomes the default, the long-run system loses the ladder that replenishes expertise.

Governance Principles: The Political Economy of Delegation

Two principles matter most. First, responsibility must follow autonomy. Second, audit trails and escalation paths are the architecture of safe delegation.

Kate Crawford argues that AI is an infrastructure of extraction (of energy, labor, and data) and that its deployment can concentrate power in the institutions that own and operate it (Crawford, 2021). Her central question, “What is being optimized, and for whom, and who gets to decide?”, is a necessary corrective. For autopilot services, disclosure, auditability, and human escalation paths are conditions for legitimacy, not optional trust features.

A third principle: preserve learning loops. The objective is not to protect tasks for their own sake; it is to protect judgment formation.

Futures of Labor: Four Scenarios, Not Predictions

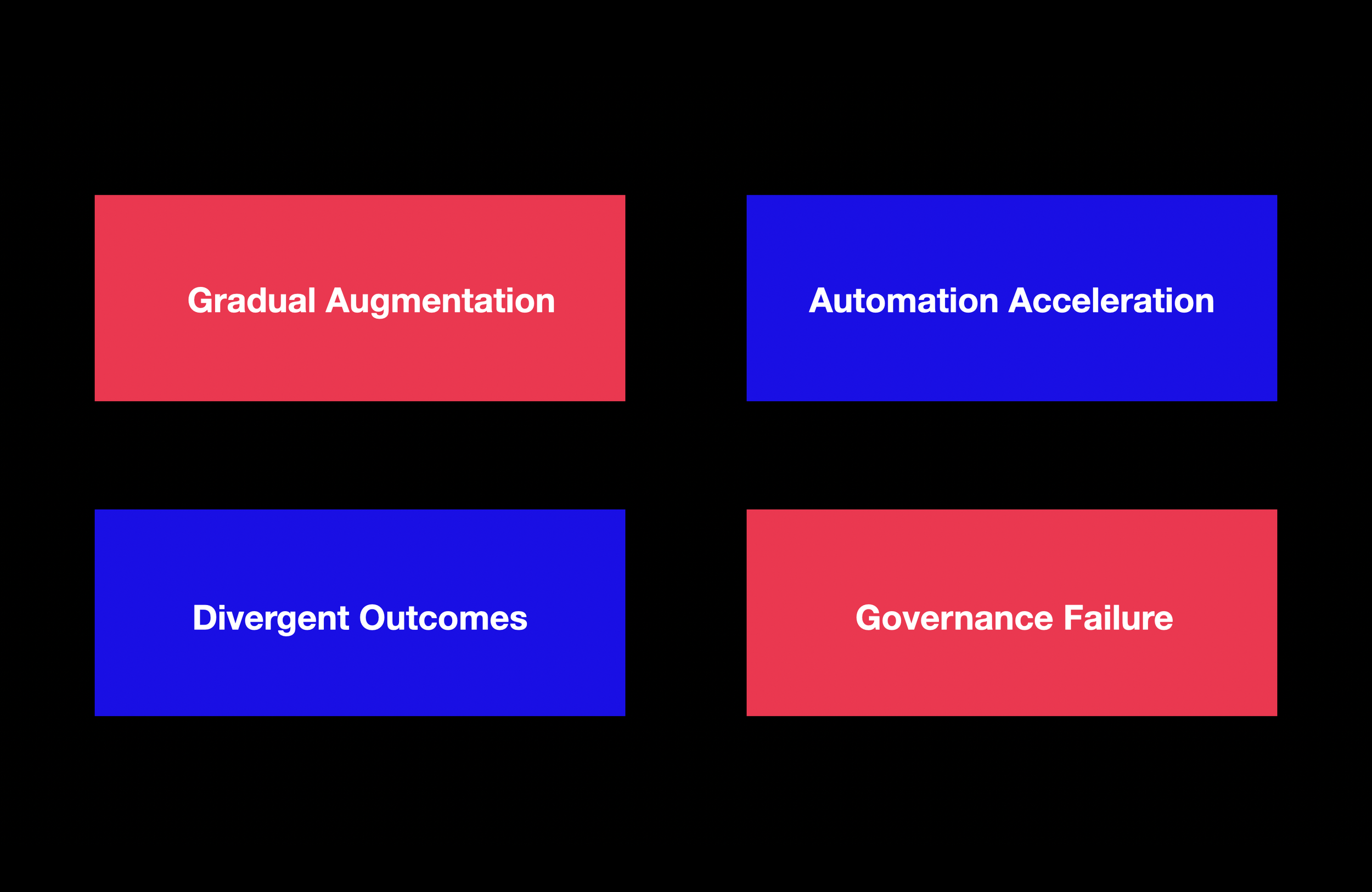

> Scenario 1: Gradual Augmentation

Augmentation remains dominant. Entry-level roles are redesigned rather than eliminated. Junior roles are restructured around Centaur-mode work. The dominant outcome is augmentation, with reconfiguration limited to skill-mix changes within preserved roles. Leading indicators: stable entry rates; persistent augmentative usage patterns; investment in redesigned junior roles.

> Scenario 2: Automation Acceleration

Competitive pressure tips the fork toward automation. The decline in job starts deepens. Within three to five years, cohort thinning produces a visible talent shortage. The dominant outcome is replacement at the entry tier, with reconfiguration propagating upward as the pipeline thins. Leading indicators: widening entry-rate gap; rising gross margins in AI-first services; declining enrollment in exposed programs.

> Scenario 3: Divergent Outcomes

Large firms invest in augmentation; smaller firms adopt autopilot services or fall behind. The result is a widening gap along multiple dimensions—firm size, educational attainment, geography. This scenario is arguably the most probable because it requires no single dominant trend—only the uneven distribution of organizational capacity that already characterizes most economies. The dominant outcome is reconfiguration everywhere, but its form differs: augmentation-led reconfiguration in well-resourced organizations (pipeline preserved but altered), replacement-led reconfiguration in under-resourced ones (pipeline broken). Leading indicators: growing productivity variance across firm sizes; divergent entry-rate trends by firm size or region.

> Scenario 4: Governance Failure and Trust Collapse

Absent enforceable governance, high-profile failures erode trust. Regulatory backlash slows adoption broadly, including where augmentation would have been beneficial (Crawford, 2021). The dominant outcome is stalled reconfiguration—neither the augmentation nor the automation branch proceeds effectively, and the system loses both the productivity gains of adoption and the pipeline preservation of deliberate design. Leading indicators: AI-driven discrimination incidents; regulatory enforcement actions; declining enterprise confidence.

What Would Change the Analysis

The core thesis rests on empirical conditions that could be falsified:

Unemployment among highly exposed workers rising by more than two percentage points, sustained over three or more quarters.

The entry-rate decline reversing cleanly with interest rate normalization (Massenkoff & McCrory, 2026).

Agentic AI systems demonstrating reliable end-to-end completion of complex professional tasks at scale.

New task creation accelerating fast enough to offset displaced tasks.
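The first of these conditions is concrete enough to express as a monitoring rule. The sketch below is one possible reading, with assumptions: the rise is measured against a fixed baseline rate, and "sustained" means consecutive quarters. The function name, the baseline, and the sample series are hypothetical.

```python
def unemployment_falsifier_triggered(quarterly_rates: list[float],
                                     baseline: float,
                                     threshold_pp: float = 2.0,
                                     min_quarters: int = 3) -> bool:
    """True if the rate exceeds baseline by more than threshold_pp
    for at least min_quarters consecutive quarters."""
    run = 0
    for rate in quarterly_rates:
        if rate - baseline > threshold_pp:
            run += 1
            if run >= min_quarters:
                return True
        else:
            run = 0  # the rise was not sustained; start counting again
    return False


# Invented series for highly exposed workers, with a 4.0% baseline.
sustained = unemployment_falsifier_triggered([4.1, 6.2, 6.5, 6.1], baseline=4.0)
interrupted = unemployment_falsifier_triggered([4.1, 6.2, 5.9, 6.3, 6.4], baseline=4.0)
print(sustained, interrupted)
```

Writing the condition this way forces the two choices the prose leaves open: what the rise is measured against, and whether a single quarter below the threshold resets the count.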

Implications: What the Signals Demand

> For Builders and Investors

The wedge—copilot or autopilot—is not merely a product decision; it is a labor-market decision. The durable moat in AI-first services is the operational system: verification architecture, exception-handling pipeline, escalation protocol, and first-party data flywheel. The moat is in the workflow, not the weights.

> For Policy and Education

The first harm is a hiring-rate signal, not an unemployment signal. The appropriate response is pipeline preservation: redesigning junior roles around supervised AI collaboration. Assessment must shift from testing AI-replaceable outputs to testing the quality of human–AI collaboration (Randazzo et al., 2025; Dell'Acqua et al., 2026).

The minimum conditions for legitimate AI deployment include disclosure, auditability, contestability, and human escalation (Crawford, 2021).

Conclusion: The Invisible Reconfiguration

This essay began with a claim about sensors: that the dominant instrument—unemployment—is poorly matched to the phase of disruption we are in. The evidence supports that claim. The disruption is not a wave of layoffs. It is a quiet narrowing of the pipeline by which the next generation of cognitive workers enters the labor market.

The governing fork is the essay's central analytical contribution. The same AI capability can produce augmentation, replacement, or reconfiguration—and the difference is not in the model but in the operating model, the contract form, the governance architecture, and the institutional choices that determine how deployment proceeds. Of the three, reconfiguration is the most likely near-term outcome and the hardest to see.

The harm channel that matters most is not mass unemployment but pipeline collapse—the thinning of entry-level cohorts whose absence will compound over time into a shortage of the very expertise that AI systems cannot yet replace.

The signals are legible. The fork is open. The question is whether organizations, policymakers, and educators will treat the choice between augmentation, automation, and reconfiguration as a deliberate design problem—or whether they will allow competitive pressure and financial incentive to close the fork by default.

References

AI-First Services Roll-Ups Report (2026). AI-First Services Roll-Ups: A New Venture Category Takes Shape. March 2026.

Atlee, T. (2004). Co-intelligence: The new science of organizational development.

Crawford, K. (2021). Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence. Yale University Press.

Dell'Acqua, F., et al. (2026). Navigating the Jagged Technological Frontier. Organization Science.

Firstminute Capital (2024). AI-enabled services is the next great venture category.

Massenkoff, M., & McCrory, P. (2026). Labor market impacts of AI: A new measure and early evidence.

Mollick, E. (2024). Co-Intelligence: Living and Working with AI. Portfolio/Penguin.

Randazzo, S., et al. (2025). Cyborgs, Centaurs and Self-Automators. HBS Working Paper.

Saper, J., Nahar, R., & Emergence Capital (2025). The AI-Native Services Playbook.